By Kenneth Henseler, 20-FEB-2026

If you spend enough time scrolling through Instagram or TikTok, you are bound to encounter highly alarming statistics about the environmental impact of artificial intelligence. Recently, a reel posted by the user ‘bizbrat’ went viral, featuring a dark, ominous video of an industrial grate accompanied by a startling text overlay: “800 BILLION litres of fresh water is being used in a single DAY to cool down systems across the world, concerning or not?”

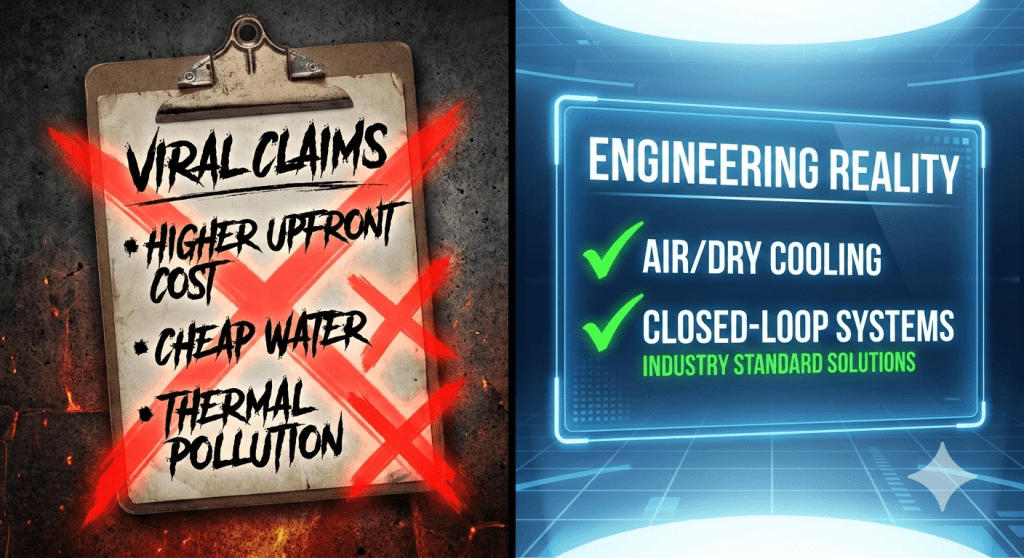

The caption went further, claiming that 11 trillion liters of water are used for this purpose overall, and alleging that companies refuse to use “Air/dry cooling” or “Closed-loop systems” because of “Higher upfront cost” and “Water is cheap & under-regulated.” Most alarmingly, the post claimed that hot water is routinely dumped into water bodies, killing organisms and causing severe “thermal pollution.”

To understand why this video exists, we have to look at the digital economy. In 2025, Oxford University Press named “rage bait” its Word of the Year.[1] The term describes online content deliberately engineered to provoke anger, frustration, or moral outrage in order to artificially inflate engagement, and its usage tripled as the digital landscape became increasingly charged.[1] The claims in this specific video are a textbook example of the phenomenon: fragmented, outdated concepts presented as modern crises to harvest outrage for algorithmic profit.[2]

The most egregious claim in the reel’s caption is the idea of “thermal pollution”—the assertion that “hot water is sometimes put into water bodies which kills many organisms.” While thermal pollution is a legitimate historical and regulatory concern for mid-century nuclear or coal power plants that utilize open-loop river cooling, modern enterprise data centers operate under entirely different engineering paradigms.

Furthermore, the irony of the video is that the exact solutions it demands—air/dry cooling and closed-loop systems—are already the standard for high-tier enterprise infrastructure.

To ground this in reality, consider the NTT Global Data Centers TX1 facility in Garland, Texas. This 230,000-square-foot fortress supports 16 megawatts of critical IT load.[3] Does it evaporate billions of liters of water daily? No. The official specifications of the TX1 facility explicitly state that it uses “waterless cooling using indirect air exchange cooling technology,” driven by 74 rooftop cooling units.[4]
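A quick back-of-the-envelope check puts the “billions of liters” figure in perspective. The sketch below asks a deliberately pessimistic hypothetical: what if TX1 rejected its entire 16 MW IT load purely by evaporating water? The latent-heat value (about 2.43 MJ/kg, water near 30 °C) and the 1 kg per liter conversion are textbook assumptions, not figures from the facility’s specifications.

```python
# Hypothetical upper bound: water evaporated per day if a facility rejected
# ALL of its IT heat by evaporation. TX1's published spec says it uses
# waterless indirect air cooling, so this scenario does not describe TX1.

IT_LOAD_W = 16e6               # 16 MW critical IT load (from the TX1 spec)
LATENT_HEAT_J_PER_KG = 2.43e6  # assumption: latent heat of vaporization near 30 °C
SECONDS_PER_DAY = 86_400
KG_PER_LITRE = 1.0             # assumption: 1 liter of liquid water ~ 1 kg


def evaporated_litres_per_day(heat_w: float,
                              latent_heat: float = LATENT_HEAT_J_PER_KG) -> float:
    """Liters of water evaporated per day to reject `heat_w` watts by evaporation alone."""
    kg_per_second = heat_w / latent_heat  # mass evaporated each second
    return kg_per_second * SECONDS_PER_DAY / KG_PER_LITRE


if __name__ == "__main__":
    litres = evaporated_litres_per_day(IT_LOAD_W)
    print(f"{litres:,.0f} L/day")
```

Even in this worst case the script prints `568,889 L/day`, roughly 0.57 million liters, which is more than a thousand times smaller than a single billion liters. And because TX1 uses waterless air exchange, its actual evaporative use for cooling is far below even that bound.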

As artificial intelligence pushes server rack power densities from a standard 10 kW up to 100 kW or even 200 kW, the industry is shifting toward liquid cooling.[5] However, these are fundamentally closed-loop systems. Whether a design uses direct-to-chip cold plates or full immersion cooling, the liquid stays sealed within the system.[6] These liquid systems are highly sustainable, capable of reducing data center energy consumption by more than 60%, and by up to 95% in optimized setups.[7]
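The physics behind the shift to liquid is the heat balance Q = ṁ · c_p · ΔT: the coolant flow needed scales with the heat load and inversely with the coolant’s heat capacity. The sketch below compares air and water for the rack densities mentioned above. The 10 K coolant temperature rise and the room-temperature air and water properties are illustrative assumptions, and real cold-plate loops often use water-glycol mixtures rather than pure water.

```python
# Sketch: coolant flow needed to carry away a rack's heat, from
# Q = m_dot * c_p * dT  =>  m_dot = Q / (c_p * dT).
# Assumptions (not from the article): 10 K coolant temperature rise and
# textbook properties for air and liquid water near room temperature.

AIR_CP = 1005.0         # J/(kg*K), specific heat of air
AIR_DENSITY = 1.2       # kg/m^3, air near 20 °C
WATER_CP = 4186.0       # J/(kg*K), specific heat of liquid water
WATER_DENSITY = 1000.0  # kg/m^3


def volumetric_flow_m3_per_s(heat_w: float, cp: float, density: float,
                             delta_t_k: float = 10.0) -> float:
    """Volume of coolant per second needed to absorb `heat_w` watts with a `delta_t_k` rise."""
    mass_flow_kg_s = heat_w / (cp * delta_t_k)
    return mass_flow_kg_s / density


if __name__ == "__main__":
    for rack_kw in (10, 100, 200):
        heat_w = rack_kw * 1000.0
        air_m3_s = volumetric_flow_m3_per_s(heat_w, AIR_CP, AIR_DENSITY)
        # Convert m^3/s to liters per minute for the water loop.
        water_l_min = volumetric_flow_m3_per_s(heat_w, WATER_CP, WATER_DENSITY) * 1000 * 60
        print(f"{rack_kw:>3} kW rack: air {air_m3_s:5.2f} m^3/s, "
              f"water {water_l_min:6.1f} L/min")
```

Under these assumptions a 100 kW rack needs about 8.3 m³ of air every second, versus roughly 143 L/min of water. In a closed loop that water is pumped around the circuit again and again, so the flow rate describes circulation, not consumption.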

The technology to run massive computational loads sustainably is not a hypothetical; it is already powering the global digital economy. The next time a viral video tells you the internet is boiling the oceans, remember that outrage is free, but good engineering is a closed loop.

🍎 Podcasts: https://podcasts.apple.com/us/podcast/the-chronos-archive/id1831231439?i=1000750756195

Sources Cited:

- Oxford Word of the Year 2025: Rage Bait [1]

- NTT Global Data Centers TX1 Specifications [5, 4]

- The Mechanics of Kyoto Cooling [6, 7]

- Liquid vs. Air Cooling in High-Density AI Data Centers [8, 9]

- Understanding Data Center Water Consumption [2, 3]
