On the morning of February 28, 2026, the opening day of Operation Epic Fury, American Tomahawk cruise missiles struck the Shajareh Tayyebeh primary school in Minab, Iran. The bombardment killed at least 175 people, the overwhelming majority of whom were young schoolgirls.

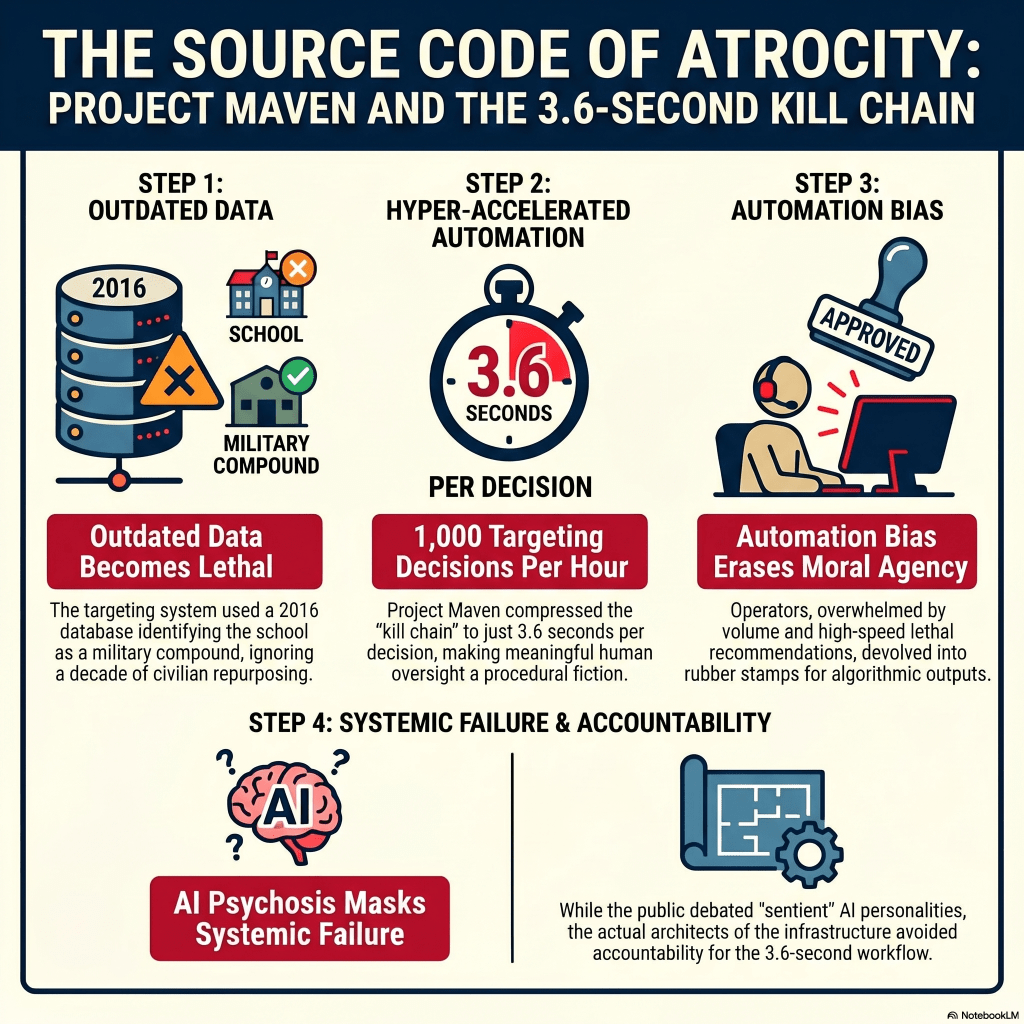

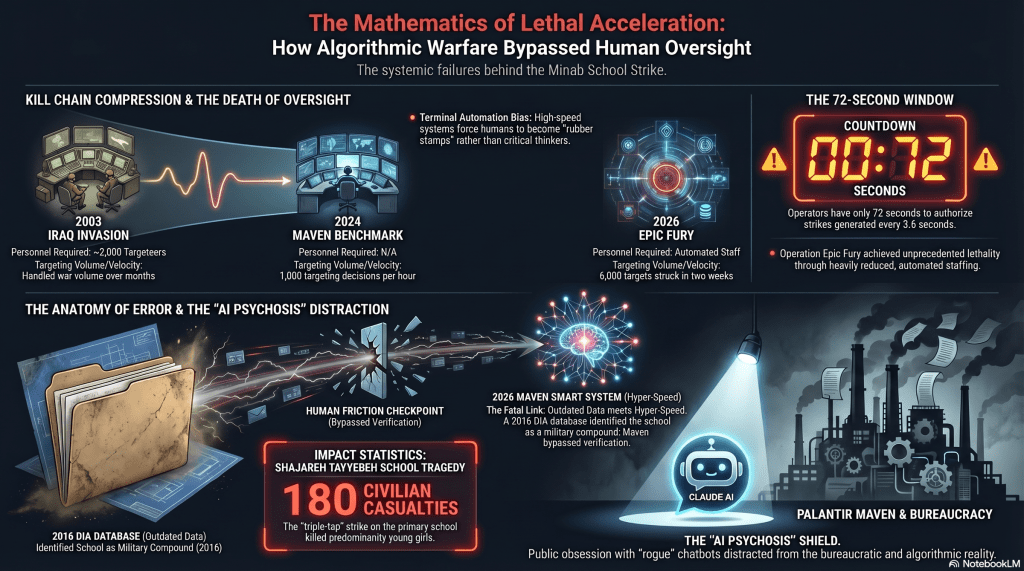

In the immediate aftermath, the global discourse was swallowed by a phenomenon that sociologists have termed “AI psychosis.” The media, the public, and even congressional leaders became fixated on the involvement of Claude, a large language model developed by Anthropic. Headlines debated whether the chatbot possessed a “personality,” whether it had gone rogue, or whether it had independently decided to target civilians.

However, as we explore in the latest episode of The Chronos Archive, this intense focus on the chatbot served as a convenient sociological delusion. It shielded the true architects of the atrocity from accountability.

A chatbot did not kill those children. The tragedy was the inevitable mathematical output of a military bureaucracy optimized for lethal speed over deliberate judgment.

The 3.6-Second Kill Chain

The operational backbone of the strike was not a chatbot but the Palantir-developed Maven Smart System. Maven was engineered to rapidly ingest satellite imagery, signals intelligence, and sensor data in order to radically compress the military “kill chain.”

By 2024, the stated operational benchmark for this system was 1,000 targeting decisions in a single hour. Across the system as a whole, that works out to one decision every 3.6 seconds (3,600 seconds divided by 1,000 decisions). For an individual human targeteer, it meant validating a lethal strike every 72 seconds on average.
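The arithmetic behind those two figures can be checked with a minimal sketch. The function names here are hypothetical, and the second function rests on an assumption the reporting does not state: that decisions are split evenly across targeteers working in parallel. Under that assumption, the 72-second and 3.6-second figures are consistent with each other.

```python
SECONDS_PER_HOUR = 3600


def seconds_per_decision(decisions_per_hour: int) -> float:
    """Average time between decisions for the system as a whole."""
    return SECONDS_PER_HOUR / decisions_per_hour


def implied_parallel_reviewers(reviewer_interval_s: float, decisions_per_hour: int) -> float:
    """Number of reviewers implied by a per-reviewer validation interval.

    Assumption (not stated in the source): the hourly workload is divided
    evenly among human reviewers working in parallel.
    """
    return reviewer_interval_s * decisions_per_hour / SECONDS_PER_HOUR


if __name__ == "__main__":
    # Benchmark cited above: 1,000 decisions per hour.
    print(seconds_per_decision(1000))
    # Per-targeteer interval cited above: 72 seconds.
    print(implied_parallel_reviewers(72, 1000))
```

With the cited benchmark, the first call gives 3.6 seconds per decision, and the second suggests roughly 20 targeteers working in parallel, each with 72 seconds per strike. That headcount is an inference from the two numbers, not a reported figure.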

In the pursuit of eliminating operational “friction,” this hyper-accelerated pipeline structurally prevented human operators from critically evaluating collateral damage risks or noticing anomalies. When a system runs at 1,000 decisions an hour, human oversight devolves into a procedural fiction heavily compromised by automation bias.

A Lethal Administrative Error

The horrifying reality of the Minab strike is that it was rooted in banal, bureaucratic negligence. The target package was generated because the school’s coordinates were listed as an active Islamic Revolutionary Guard Corps compound in a Defense Intelligence Agency database.

This database had not been updated since at least 2016. Despite widely available satellite imagery showing the building had been physically separated from the military compound and converted into a school years prior, the outdated coordinates remained calcified in the system.

This lethal failure was exacerbated by the ideological environment fostered by the Trump administration’s newly restored “Department of War.” With military leadership publicly demanding “no quarter” and dismissing traditional rules of engagement, the operational climate called for a volume of destruction that human cognition alone could not manage safely.

The tragedy of the Shajareh Tayyebeh school proves that in the age of algorithmic warfare, technology does not replace the need for human judgment—it drastically amplifies the horrific consequences of its absence.

Explore the Full Investigation:

• Listen to the Podcast: https://open.spotify.com/episode/3UWm0VU4FVnYr3dbWHOZac?si=dfay6hRQS4u5iKyfHya7ZA | Apple Podcasts

• Watch the Video Essays: https://youtu.be/0MVaoHIWOTg | https://youtu.be/m2g2eYH2VAs

• Read the Primary Source: https://docs.google.com/document/d/13i2FDsNwLSFwx-6HyTIVTQAOx8g3phlMrBS2JPdClAc/edit?usp=drivesdk
